Bridging the Gap - How We Made AI Agents 10x Developers in Our Organization

May 22, 2025 - 5 min read

The Problem: AI Agents Don’t Know Your Organization

AI coding assistants have revolutionized how we write code. They can generate functions, debug errors, and suggest optimizations in seconds. The promise is clear: become a 10x developer with AI as your co-pilot.

But here’s the reality check: the moment your AI agent needs to work with your organization’s internal infrastructure, the magic breaks down.

Your AI agent doesn’t know:

- Which design system your team uses

- How your CI/CD pipelines are structured

- What internal libraries you have for logging, metrics, and tracing

- Your organization’s coding standards and conventions

- How to manage your staging environments

- Which services exist and how they interact

- Your deployment workflows and requirements

Instead of a multiplier, you get a drag. Instead of a driver, you get a passenger asking for directions every few minutes.

This isn’t just a frontend problem, and it isn’t specific to any one company. Every organization runs into it when adopting AI development tools.

The Root Cause: Context is King

AI agents are incredibly powerful, but they’re only as good as the context they have. When working with public libraries and frameworks, they have access to extensive training data, documentation, and examples. But your internal tools? Your organizational standards? That’s a black box to them.

The traditional solution has been to manually paste documentation, explain architecture in chat, or let the AI fumble through trial and error. This works for simple tasks, but it doesn’t scale. It certainly doesn’t make you 10x more productive.

Our Solution: Teaching AI Agents About Our Organization

In our organization, we tackled this problem head-on for our frontend infrastructure. Our solution has three key components:

1. Comprehensive Documentation Layer

We created structured documentation that covers:

- Architecture docs: How our multi-service portal architecture works, request flows, and system design

- Conventions: Naming standards, coding patterns, and best practices

- Workflows: Translation pipelines, deployment checklists, and contribution guidelines

- Visual diagrams: Mermaid diagrams showing request flows, build pipelines, and deployment processes

These aren’t just README files scattered across repositories. They form a curated, interconnected knowledge base designed specifically for AI consumption.

2. Living Project Catalog

We maintain a catalog of all our frontend infrastructure projects with rich metadata:

- Project types (services, libraries, templates)

- Technology stacks

- npm package names

- Internal dependencies

- Documentation links

- Ownership information

This gives AI agents a complete map of our ecosystem - what exists, how it’s connected, and where to find more information.

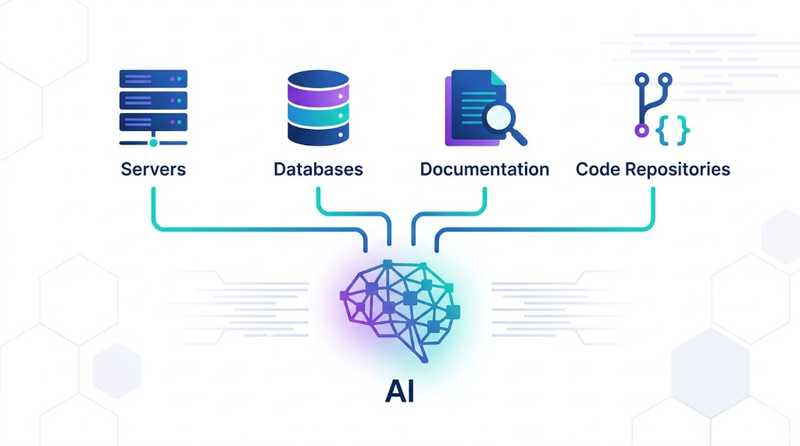
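To make the catalog idea concrete, here is a minimal sketch of what one entry and a simple dependency query could look like. The field names, project names, package scope, and URLs are all illustrative assumptions, not our actual schema.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CatalogEntry:
    """One project in the catalog. Field names are illustrative assumptions."""
    name: str
    project_type: str                      # "service", "library", or "template"
    tech_stack: tuple[str, ...]
    npm_package: Optional[str]             # None if the project is not published
    internal_dependencies: tuple[str, ...]
    docs_url: str
    owner: str


# Hypothetical entries, keyed by project name.
CATALOG = {
    entry.name: entry
    for entry in (
        CatalogEntry(
            name="ui-components",
            project_type="library",
            tech_stack=("TypeScript", "React"),
            npm_package="@example/ui-components",
            internal_dependencies=(),
            docs_url="https://docs.example.com/ui-components",
            owner="design-system-team",
        ),
        CatalogEntry(
            name="analytics-portal",
            project_type="service",
            tech_stack=("TypeScript", "React"),
            npm_package=None,
            internal_dependencies=("ui-components",),
            docs_url="https://docs.example.com/analytics-portal",
            owner="analytics-team",
        ),
    )
}


def dependents_of(project: str) -> list[str]:
    """Return the names of projects that depend directly on `project`."""
    return sorted(
        entry.name
        for entry in CATALOG.values()
        if project in entry.internal_dependencies
    )
```

With entries shaped like this, a question such as "what breaks if I change the component library?" becomes a lookup (`dependents_of("ui-components")`) rather than a search through repositories.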

3. MCP Server: The Bridge Between AI and Infrastructure

The pieces come together in a Model Context Protocol (MCP) server. MCP is a standardized way for AI agents to access external tools and data sources.

Our MCP server provides AI agents with specialized tools:

Discovery Tools:

- list_projects: Browse all available frontend infrastructure projects

- get_project_metadata: Get detailed information about specific projects, including dependencies, tech stack, and documentation links

Documentation Tools:

- get_architecture_docs: Fetch architecture documentation, diagrams, conventions, and workflows

- get_documentation: Access project-specific documentation and guides

Source Code Tools:

- list_project_files: Explore repository structure and file organization

- get_project_files: Fetch source code from any project (with proper authentication)

Scaffolding Tools:

- clone_template: Get instructions to scaffold new services from approved templates

All of this happens securely through Okta authentication, ensuring that AI agents only access what developers are authorized to see.
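As a rough illustration of the pattern, the sketch below registers two of the tools by name and filters results by the caller's groups. It is plain Python, not our server and not an MCP SDK: a real server would exchange JSON-RPC messages through an MCP library, and the groups would come from the identity provider after sign-in. The `allowed_groups` field, the group names, and the project data are assumptions made up for the example.

```python
from __future__ import annotations

import json
from typing import Any, Callable

# Stand-in catalog. `allowed_groups` is a hypothetical field used only here.
PROJECTS = {
    "ui-components": {"type": "library", "allowed_groups": {"frontend"}},
    "service-template": {"type": "template", "allowed_groups": {"frontend"}},
    "billing-portal": {"type": "service", "allowed_groups": {"billing"}},
}

TOOLS: dict[str, Callable[..., Any]] = {}


def tool(func: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function as a tool under its own name."""
    TOOLS[func.__name__] = func
    return func


def _visible(user_groups: set[str]) -> dict[str, dict]:
    """Projects the caller may see: those sharing at least one group."""
    return {
        name: project
        for name, project in PROJECTS.items()
        if project["allowed_groups"] & user_groups
    }


@tool
def list_projects(user_groups: set[str]) -> list[str]:
    return sorted(_visible(user_groups))


@tool
def get_project_metadata(user_groups: set[str], project: str) -> dict:
    visible = _visible(user_groups)
    if project not in visible:
        # Same error for "missing" and "forbidden" so names are not leaked.
        raise LookupError(f"project not found: {project}")
    return {"name": project, "type": visible[project]["type"]}


def call_tool(name: str, user_groups: set[str], arguments: dict | None = None) -> str:
    """Dispatch a tool call by name and return the outcome as a JSON string."""
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        result = TOOLS[name](user_groups, **(arguments or {}))
    except (LookupError, TypeError) as exc:
        return json.dumps({"error": str(exc)})
    return json.dumps({"result": result})
```

The design choice worth noting is that the access check lives inside the tools rather than in the agent: the agent can ask for anything, but it only ever receives what the signed-in developer could see.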

How It Works in Practice

Here’s a real-world scenario:

Developer: “Create a new frontend service that displays user analytics with charts”

Without MCP Server:

AI: I'll create a React app with Chart.js...

Developer: No, we use our internal design system

AI: Which design system?

Developer: Our component library

AI: How do I import it?

Developer: *pastes package name and import examples*

AI: How should I structure the service?

Developer: *explains our multi-service architecture*

AI: How do I deploy it?

Developer: *explains deployment pipeline, service registration, manifest files, etc.*

With MCP Server:

AI: Let me check your frontend infrastructure...

[Calls list_projects to discover available tools]

[Calls get_architecture_docs to understand the system]

[Calls get_project_metadata to learn about the component library]

[Calls clone_template to get the service template]

I'll scaffold a new service using your standard template, integrate with your component library, and set up the deployment configuration following your conventions.

The AI agent autonomously discovers what it needs to know, follows organizational standards, and produces production-ready code that fits seamlessly into your infrastructure.
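The discovery sequence in this scenario can be sketched as a series of tool calls whose results are collected into one context object before any code is generated. In practice the agent decides which tools to call and in what order; the fixed order, the `call` interface, and the argument names `project` and `template` are assumptions made for illustration.

```python
from typing import Callable

# Anything that takes a tool name plus arguments and returns that tool's result.
ToolCaller = Callable[[str, dict], object]


def gather_context(call: ToolCaller, component_library: str, template: str) -> dict:
    """Run the four discovery calls in order and collect their results."""
    return {
        "projects": call("list_projects", {}),
        "architecture": call("get_architecture_docs", {}),
        "component_library": call(
            "get_project_metadata", {"project": component_library}
        ),
        "scaffold_instructions": call("clone_template", {"template": template}),
    }


def echo_call(name: str, arguments: dict) -> object:
    """Stub standing in for a real MCP client; it just echoes the request."""
    return {"tool": name, "arguments": arguments}


context = gather_context(echo_call, "ui-components", "service-template")
```

Everything the developer pasted by hand in the first conversation ends up in `context` without a single follow-up question.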

The Impact

With this approach, organizations can achieve:

- Reduced onboarding time: New developers can start contributing faster because AI agents guide them through organizational standards

- Consistent code quality: AI agents follow conventions automatically

- Fewer production issues: AI agents know about critical deployment requirements that developers might miss

- Faster feature development: Developers spend less time explaining context and more time building features

The Bigger Picture: Every Organization Needs This

Our implementation is specific to our frontend infrastructure, but the pattern is universal. Every organization with internal tools, platforms, and standards faces this challenge.

The Model Context Protocol provides a standardized way to solve it. Whether you’re building:

- Backend services with internal frameworks

- Mobile apps with custom SDKs

- Infrastructure with proprietary tools

- Data pipelines with internal platforms

You need a way to teach AI agents about your organization’s unique context.

Key Takeaways

- AI agents are powerful, but context-blind: They need explicit access to your organizational knowledge

- Documentation alone isn’t enough: You need a structured, machine-readable way to expose information

- MCP worked for us: Model Context Protocol provided a proven pattern for extending AI agents - it might work for you too, or you might find a different implementation that fits your needs

- Security matters: Proper authentication ensures AI agents respect access controls

- Start small, iterate: Begin with your most critical pain points and expand over time

Getting Started

If you’re interested in building something similar for your organization, start by identifying real pain points:

- Test with real tasks: Ask your AI agent to perform common tasks in your organization - “Create a new service”, “Add authentication to this endpoint”, “Set up monitoring for this component”

- Identify missing knowledge: When the AI fails or produces incorrect code, note what organizational knowledge it’s missing - Is it the deployment process? Your internal libraries? Coding conventions?

- Add context manually first: Provide the missing information directly in the chat and verify the AI can complete the task successfully with that context

- Gather 2-3 use cases: Repeat this process for different scenarios to understand the pattern of what knowledge AI agents need most

- Build tools for those use cases: Once you have clear patterns, create MCP tools that provide that knowledge programmatically - start with tools that address your most common pain points

- Validate with original tasks: Test that your tools actually help the AI agent solve the tasks from your use cases without manual intervention

This approach ensures you’re building tools that solve real problems rather than creating infrastructure that might not get used.
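One lightweight way to run the first few steps is to log each trial and tally which kinds of knowledge were missing. The sketch below assumes you record the gaps by hand as short labels; the tasks, labels, and outcomes shown are made-up examples, not measurements.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass
class TaskTrial:
    """One attempt at a real task, with the knowledge gaps you observed."""
    task: str
    succeeded: bool
    missing_knowledge: tuple[str, ...] = ()


def rank_gaps(trials: list[TaskTrial]) -> list[tuple[str, int]]:
    """Count gaps across failed trials, most frequent first."""
    counts = Counter(
        gap
        for trial in trials
        if not trial.succeeded
        for gap in trial.missing_knowledge
    )
    return counts.most_common()


# Hypothetical trial log.
trials = [
    TaskTrial("Create a new service", False,
              ("deployment process", "internal libraries")),
    TaskTrial("Add authentication to this endpoint", False,
              ("internal libraries",)),
    TaskTrial("Set up monitoring for this component", False,
              ("internal libraries", "coding conventions")),
    TaskTrial("Rename a React component", True),
]

for gap, count in rank_gaps(trials):
    print(f"{count}x {gap}")
```

The gap at the top of the list is a reasonable candidate for your first tool, and the same trial log doubles as the validation set once the tool exists.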

The future of AI-assisted development isn’t just about smarter models - it’s about giving those models the right context to understand your organization. When you bridge that gap, AI agents truly become the 10x multiplier they promise to be.

Want to learn more about the Model Context Protocol? Check out modelcontextprotocol.io for the specification and examples.